The Desktop AI Revolution: Rethinking the Role of Arm CPUs in Local RAG Systems

Rethinking the role of CPUs in AI: A practical RAG implementation on DGX Spark

The Bottleneck of Enterprise Search

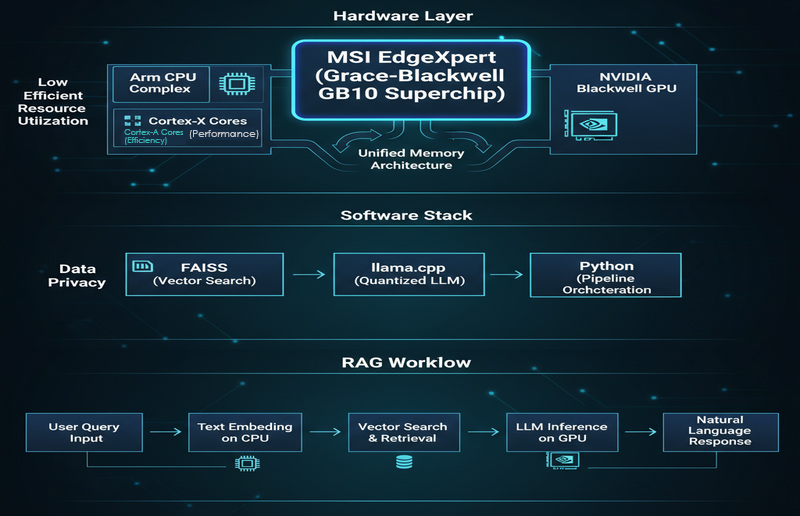

Minimum Viable Architecture for Local RAG

- Data Cleaning: Converting PDFs, Word docs, or web pages into a unified format and performing chunking.

- Embedding: Transforming each data chunk into a vector using the CPU.

- Vector Database: Indexing and storing these vectors using tools like FAISS.

- Retrieval: Converting user queries into embeddings to find the Top-K most relevant document fragments.

- Generation: Feeding the retrieved evidence and the query into a local LLM (e.g., a model served through llama.cpp) to generate a cited response.
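The five stages above can be sketched end to end in a few lines. This is a minimal illustration under loud assumptions, not the implementation used in the article: `embed` is a toy hashed bag-of-words function standing in for a real embedding model, `retrieve` is a brute-force inner-product scan standing in for a FAISS index, and the two sample documents are invented. The generation step is represented only by the prompt that would be passed to the local LLM.

```python
import hashlib
import math
import re

DIM = 1024  # hypothetical vector width for the toy embedder


def chunk(text, max_words=60):
    """Data cleaning/chunking: split text into pieces of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text):
    """Toy stand-in for an embedding model: hashed bag-of-words, L2-normalized."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.md5(token.encode("utf-8")).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def build_index(docs):
    """Vector store: list of (source, chunk_text, vector). FAISS would replace this."""
    return [(src, piece, embed(piece))
            for src, text in docs.items()
            for piece in chunk(text)]


def retrieve(question, index, k=2):
    """Retrieval: brute-force inner product over all chunks, best k first."""
    q = embed(question)
    scored = [(sum(a * b for a, b in zip(q, vec)), src, piece)
              for src, piece, vec in index]
    return sorted(scored, reverse=True)[:k]


def build_prompt(question, hits):
    """Generation input: evidence labeled by source so the answer can cite it."""
    evidence = "\n".join(f"[{src}] {piece}" for _, src, piece in hits)
    return ("Answer using only the evidence below and cite sources in brackets.\n\n"
            f"{evidence}\n\nQuestion: {question}\nAnswer:")


# Invented sample documents, for illustration only.
docs = {
    "gpio_notes.txt": "GPIO pins on the board are numbered using the BCM scheme.",
    "bios_notes.txt": "The BIOS setup menu controls boot order and fan profiles.",
}
index = build_index(docs)
question = "Which scheme numbers the GPIO pins?"
hits = retrieve(question, index, k=1)
prompt = build_prompt(question, hits)
print(prompt)
```

In a real deployment, the embedder and the index would be swapped for an actual model and FAISS, while the shape of the loop stays the same.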

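- Embedding latency: approx. 70–90 ms (suited to interactive, low-latency applications)

- DRAM usage: 3.5 GB at idle, 14 GB at peak (net increase of approx. 10 GB)

- Memory during the CPU-to-GPU handover: almost no spike (indicates that large-scale data replication is avoided)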

Surprise #1: CPU Superiority in Embedding

- Low Latency: Embedding latency on the Arm CPU is approximately 70–90 ms, which is ideal for interactive, real-time queries.

- Efficiency: Using the CPU avoids the overhead of GPU scheduling, startup time, and data transfer via PCIe.

- Stability: Running embedding on the CPU keeps the "Query → Retrieval → Response" loop within a timeframe acceptable to users.
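A latency figure like the one above is only meaningful if it is measured consistently. The following is a rough sketch of a timing harness, assuming wall-clock time per query is the quantity of interest. `fake_embed` is a hypothetical placeholder for whatever embedding call is actually used, and the nearest-rank p95 is one common convention rather than the method used in the article.

```python
import statistics
import time


def measure_latency_ms(fn, queries, warmup=3):
    """Return one wall-clock latency in milliseconds per call to fn(query)."""
    for q in queries[:warmup]:
        fn(q)  # warm-up calls are not timed
    samples = []
    for q in queries:
        start = time.perf_counter()
        fn(q)
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def summarize(samples):
    """Median and 95th percentile (nearest-rank method) of the samples."""
    ordered = sorted(samples)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return {"median_ms": statistics.median(ordered), "p95_ms": ordered[rank - 1]}


def fake_embed(text):
    """Hypothetical placeholder; replace with the real embedding call."""
    return [float(len(word)) for word in text.split()]


queries = ["how do I reset the BIOS", "which pin is GPIO17"] * 10
stats = summarize(measure_latency_ms(fake_embed, queries))
print(stats)
```

Reporting the p95 alongside the median helps show whether the loop stays responsive for the slowest queries, not just the typical one.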

Surprise #2: The Power of Unified Memory

- Resource Efficiency: Implementation data shows DRAM usage rising from 3.5 GB at idle to a peak of 14 GB during RAG operations, which suggests the memory is doing useful work rather than holding duplicate copies of the same data.

- Zero-Copy Transition: The transition from CPU embedding to GPU generation causes almost no spike in memory usage, confirming that massive data replication is avoided.
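The article does not say how these memory figures were collected. One plausible approach on Linux, sketched here as an assumption, is to sample `/proc/meminfo` before and during a RAG run and compare used memory, taken as `MemTotal` minus `MemAvailable`. The sample text below is invented so that it reproduces the 3.5 and 14 figures, and the helper reports GiB.

```python
def used_gib(meminfo_text):
    """Used memory in GiB (MemTotal - MemAvailable) from /proc/meminfo-style text."""
    fields = {}
    for line in meminfo_text.splitlines():
        name, _, rest = line.partition(":")
        parts = rest.split()
        if parts:
            fields[name.strip()] = int(parts[0])  # values are reported in kB
    return (fields["MemTotal"] - fields["MemAvailable"]) / (1024 ** 2)


def read_used_gib(path="/proc/meminfo"):
    """Read the live value on a Linux system."""
    with open(path, "r", encoding="utf-8") as handle:
        return used_gib(handle.read())


# Invented samples chosen to mirror the figures quoted in the text.
idle_sample = "MemTotal:       134217728 kB\nMemAvailable:   130547712 kB\n"
peak_sample = "MemTotal:       134217728 kB\nMemAvailable:   119537664 kB\n"

idle = used_gib(idle_sample)
peak = used_gib(peak_sample)
print(f"idle={idle} GiB, peak={peak} GiB, net increase={peak - idle} GiB")
```

Sampling at short intervals across the handover from embedding to generation is what would reveal a spike if data were being copied between separate memory pools.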

Surprise #3: Eliminating AI Hallucinations

- Comparison Test: In tests involving Raspberry Pi GPIO definitions, non-RAG models gave confident but contradictory answers.

- Verifiable Output: With RAG, the system provided answers consistent with official documentation, citing specific chapters and tables.
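Verifiable output depends on two things: a prompt that asks for citations and a check that the answer actually contains them. The sketch below shows one possible shape for both. The bracketed label format, the prompt wording, and the `NOT FOUND` fallback are illustrative assumptions, and the passages are placeholders rather than real documentation.

```python
import re


def build_cited_prompt(question, passages):
    """passages: list of (label, text). Returns a prompt that demands citations."""
    lines = [
        "Answer the question using only the passages below.",
        "After every claim, cite the passage label in square brackets.",
        "If the passages do not contain the answer, reply: NOT FOUND.",
        "",
    ]
    lines += [f"[{label}] {text}" for label, text in passages]
    lines += ["", f"Question: {question}", "Answer:"]
    return "\n".join(lines)


def citations_valid(answer, passages):
    """True only if the answer cites at least one label and every label is known."""
    allowed = {label for label, _ in passages}
    cited = set(re.findall(r"\[([^\[\]]+)\]", answer))
    return bool(cited) and cited <= allowed


# Placeholder passages, for illustration only.
passages = [
    ("doc-1", "Placeholder passage about pin numbering."),
    ("doc-2", "Placeholder passage about voltage limits."),
]
prompt = build_cited_prompt("Which numbering scheme applies?", passages)
print(prompt)
print(citations_valid("The scheme is described in the pin table [doc-1].", passages))
```

A check like `citations_valid` only confirms that the cited labels exist. Whether the cited passage really supports the claim still needs human or automated review.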

Surprise #4: Desktop AI is Deployment-Ready

- Data Sovereignty: All data remains within the intranet, ensuring compliance.

- Scalability: Systems can be expanded to include access controls, audit logs, and multi-language retrieval.

Actionable Advice: Building Your PoC

- Define Scope: Select 3–5 frequently asked, clear-cut questions (e.g., BIOS parameters, SOPs).

- Prepare Data: Curate 50–200 representative documents that are accurate and parsable.

- Indexing: Perform conservative chunking (300–800 tokens) and build a FAISS index.

- Evaluate: Measure embedding latency (target < 100 ms) and retrieval accuracy.

- Enforce Citations: Configure the prompt to require source citations to prevent fabrication.
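Two of the steps above, chunking and measuring retrieval accuracy, are easy to prototype before any model is involved. The sketch below makes several assumptions: whitespace-separated words are used as a rough proxy for model tokens, the 50-word overlap is an added convention not mentioned in the text, and retrieval accuracy is approximated as hit rate at k, meaning the share of questions whose expected source appears among the top k results. The question and document names are hypothetical.

```python
def chunk_words(text, size=500, overlap=50):
    """Split text into windows of `size` words that overlap by `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must satisfy 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reaches the end of the text
    return chunks


def hit_rate_at_k(ranked, expected, k=3):
    """ranked: {question: [source, ...]} best first; expected: {question: source}."""
    hits = sum(1 for question, source in expected.items()
               if source in ranked.get(question, [])[:k])
    return hits / len(expected)


# Hypothetical evaluation set: 4 questions, each with one expected source.
expected = {"q1": "sop.pdf", "q2": "bios.pdf", "q3": "gpio.pdf", "q4": "faq.pdf"}
ranked = {
    "q1": ["sop.pdf", "faq.pdf"],
    "q2": ["faq.pdf", "bios.pdf"],
    "q3": ["sop.pdf", "faq.pdf", "bios.pdf"],
    "q4": ["faq.pdf"],
}
print(len(chunk_words("word " * 1200)), hit_rate_at_k(ranked, expected, k=3))
```

Starting with a small, fixed question set like this makes it possible to compare chunk sizes within the 300–800 range on the same footing.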

FAQ: Technical Clarifications

Q1: Why is the CPU faster for embedding?

A: Because user queries are typically "small batch" and "short." The CPU avoids the high overhead of GPU scheduling and PCIe data transfer, resulting in lower end-to-end latency.

Q2: What is the benefit of Unified Memory?

A: It minimizes the need to copy large amounts of data between the CPU and GPU, ensuring a smooth handover from the retrieval phase to the generation phase.

Q3: Is a GPU mandatory for local RAG?

A: Not necessarily for the retrieval phase, which the CPU handles efficiently. However, a GPU provides significant advantages during the final "generation" phase if using larger models or requiring higher throughput.